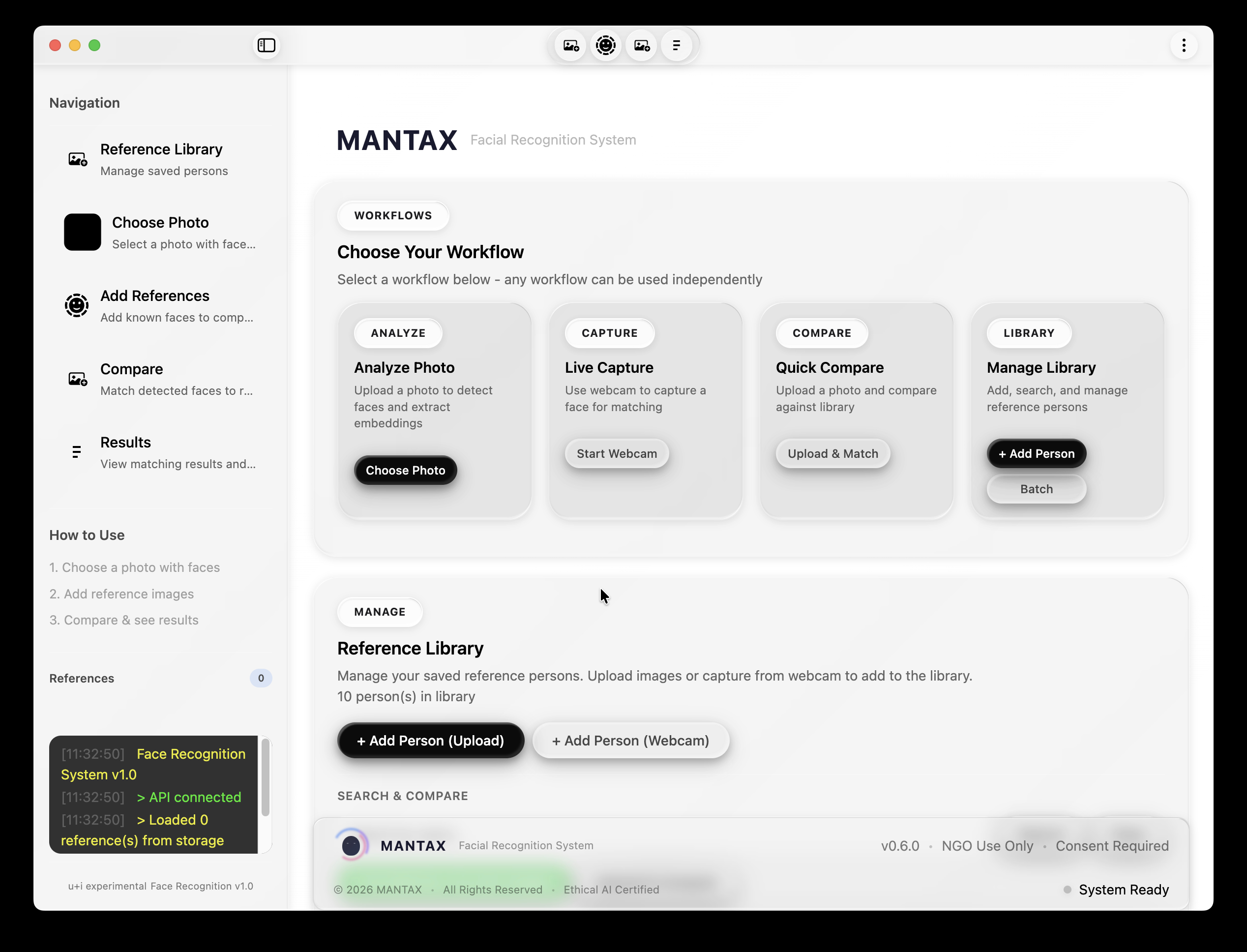

Building MANTAX: An Ethical Facial Recognition System for NGOs

#Introduction

This post is a technical deep-dive into MANTAX, an ethical facial recognition system designed for NGO use cases. We'll walk through the architecture, data flows, and implementation details a developer needs to understand how the system works.

#System Architecture Overview

MANTAX follows a three-tier architecture with clear separation between presentation, business logic, and machine learning components.

#Technology Stack

| Layer | Technology | Purpose |
|-------|------------|---------|
| Frontend | Electron + Vanilla JS | Desktop app with custom titlebar |
| Styling | SCSS → CSS | macOS Tahoe Liquid Glass design |
| Backend | Flask (Python) | REST API with 31 endpoints |
| ML Runtime | ONNX Runtime (ArcFace) + PyTorch (FaceNet) | Dual-model embedding extraction |
| Face Detection | OpenCV DNN + MediaPipe | Face localization + 468-point landmarks |

#The Data Pipeline

When a user uploads an image, it flows through a well-defined pipeline. The sections below trace that journey stage by stage.

#Face Detection Module

The detection module (`src/detection/__init__.py`) handles the first critical step: finding where faces are in an image.

#Detection Flow

#Primary Detection Method: OpenCV DNN

The system uses a pre-trained Caffe model for deep learning-based face detection.

Model files required:

- deploy.prototxt.txt: Caffe architecture definition

- res10_300x300_ssd_iter_140000.caffemodel: Pre-trained weights
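When this SSD model is run through OpenCV's DNN module, each detection comes back as a row of seven values, with the confidence at index 2 and the normalized box corners at indices 3 to 6. The following is a minimal pure-Python sketch of the post-processing step only. The helper name `parse_detections` and the 0.5 threshold are assumptions for illustration, not MANTAX's actual code.

```python
from typing import List, NamedTuple, Sequence


class FaceBox(NamedTuple):
    x1: int
    y1: int
    x2: int
    y2: int
    confidence: float


def parse_detections(rows: Sequence[Sequence[float]], width: int, height: int,
                     min_confidence: float = 0.5) -> List[FaceBox]:
    """Convert SSD rows [_, _, conf, x1, y1, x2, y2] (normalized) to pixel boxes."""
    boxes = []
    for row in rows:
        confidence = float(row[2])
        if confidence < min_confidence:
            continue  # hypothetical threshold; tune for the deployment
        # Scale normalized corners to pixels and clamp to the image bounds.
        x1 = max(0, min(width - 1, int(row[3] * width)))
        y1 = max(0, min(height - 1, int(row[4] * height)))
        x2 = max(0, min(width - 1, int(row[5] * width)))
        y2 = max(0, min(height - 1, int(row[6] * height)))
        if x2 > x1 and y2 > y1:  # drop degenerate boxes
            boxes.append(FaceBox(x1, y1, x2, y2, confidence))
    # Highest-confidence faces first.
    return sorted(boxes, key=lambda b: b.confidence, reverse=True)
```

In a real pipeline the rows would come from the network's forward pass (for example, `detections[0, 0]` of the output blob).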

#Fallback: Haar Cascade

If the DNN model fails to load, the system falls back to OpenCV's Haar cascade detector.
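One way to express this kind of fallback is to pass both loaders in as callables and try them in order. This is a sketch of the pattern only; `load_detector` and its arguments are hypothetical names, and the real module may structure this differently.

```python
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def load_detector(load_dnn: Callable[[], T],
                  load_haar: Callable[[], T]) -> Tuple[str, T]:
    """Try the DNN loader first; on any failure, use the Haar cascade loader."""
    try:
        return "dnn", load_dnn()
    except Exception as exc:  # e.g. model files missing or unreadable
        print(f"DNN detector unavailable ({exc}); falling back to Haar cascade")
        return "haar", load_haar()
```

With OpenCV, the two callables would typically wrap `cv2.dnn.readNetFromCaffe(...)` and `cv2.CascadeClassifier(...)`. Returning the backend name alongside the detector lets the caller report which method produced a result.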

#Face ROI Extraction

Once faces are detected, the API extracts the region of interest (ROI) for each one.
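A common way to do this is to pad the detected box slightly and clamp it to the image bounds before cropping. The sketch below assumes an `(x, y, w, h)` box and a hypothetical padding fraction; whether MANTAX pads its ROIs, and by how much, is not stated here.

```python
from typing import Tuple


def padded_roi(box: Tuple[int, int, int, int], image_size: Tuple[int, int],
               padding: float = 0.25) -> Tuple[int, int, int, int]:
    """Expand (x, y, w, h) by a fraction of its size and clamp to the image.

    Returns (x1, y1, x2, y2), suitable for slicing as image[y1:y2, x1:x2].
    """
    x, y, w, h = box
    img_w, img_h = image_size
    pad_x, pad_y = int(w * padding), int(h * padding)
    x1 = max(0, x - pad_x)
    y1 = max(0, y - pad_y)
    x2 = min(img_w, x + w + pad_x)
    y2 = min(img_h, y + h + pad_y)
    return x1, y1, x2, y2
```

Clamping matters for faces near the frame edge, where a padded box would otherwise produce negative or out-of-range indices.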

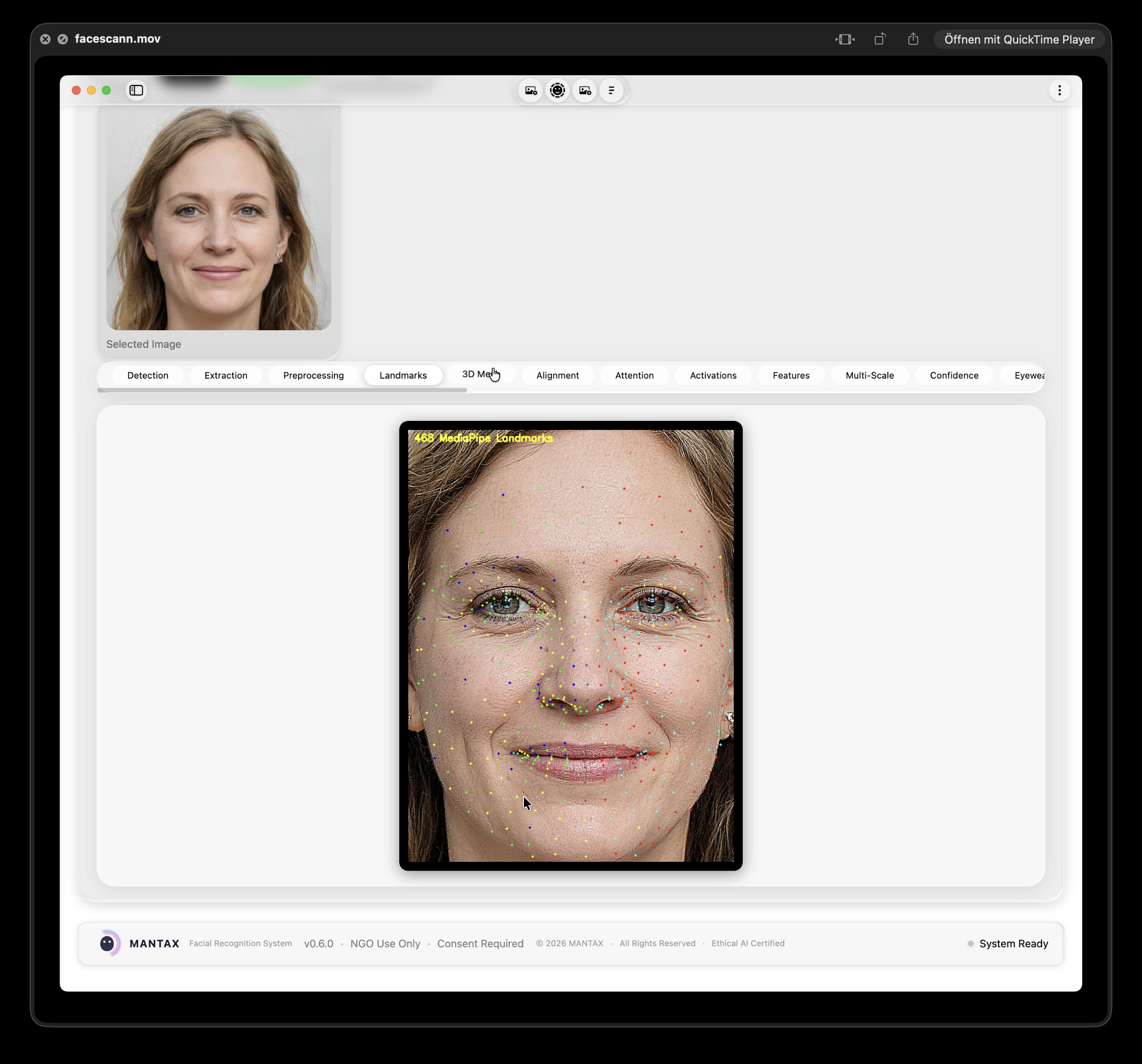

#Facial Landmark Detection

After detecting faces, the system extracts 468 facial landmarks per face using MediaPipe Face Mesh.

#Landmark Extraction Code
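MediaPipe Face Mesh reports each landmark with `x` and `y` normalized to the image width and height, so a conversion step is needed before drawing or measuring in pixels. The following sketch covers only that conversion; `landmarks_to_pixels` is a hypothetical helper and takes plain `(x, y, z)` tuples rather than MediaPipe objects.

```python
from typing import Iterable, List, Tuple


def landmarks_to_pixels(normalized: Iterable[Tuple[float, float, float]],
                        width: int, height: int) -> List[Tuple[int, int]]:
    """Map normalized (x, y, z) landmarks to integer pixel coordinates."""
    points = []
    for x, y, _z in normalized:
        # Clamp: landmarks near the border can fall slightly outside [0, 1].
        px = min(max(int(round(x * width)), 0), width - 1)
        py = min(max(int(round(y * height)), 0), height - 1)
        points.append((px, py))
    return points
```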

#Geometric Feature Extraction

For comparison purposes, we extract scale-invariant geometric features from the landmarks.
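Scale invariance is usually obtained by dividing every distance by a reference length on the same face, such as the inter-ocular distance. The sketch below illustrates the idea with named points. The feature names and the choice of reference length are assumptions, not MANTAX's actual feature set.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float]


def geometric_features(pts: Dict[str, Point]) -> Dict[str, float]:
    """Distances divided by the inter-ocular distance, so image scale cancels out."""
    def dist(a: str, b: str) -> float:
        return math.dist(pts[a], pts[b])

    iod = dist("left_eye", "right_eye")
    if iod == 0:
        raise ValueError("eye landmarks coincide; cannot normalize")
    return {
        "nose_to_chin": dist("nose_tip", "chin") / iod,
        "mouth_width": dist("mouth_left", "mouth_right") / iod,
        "eye_to_nose": dist("left_eye", "nose_tip") / iod,
    }
```

Because every feature is a ratio, the same face photographed at twice the resolution yields the same values.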

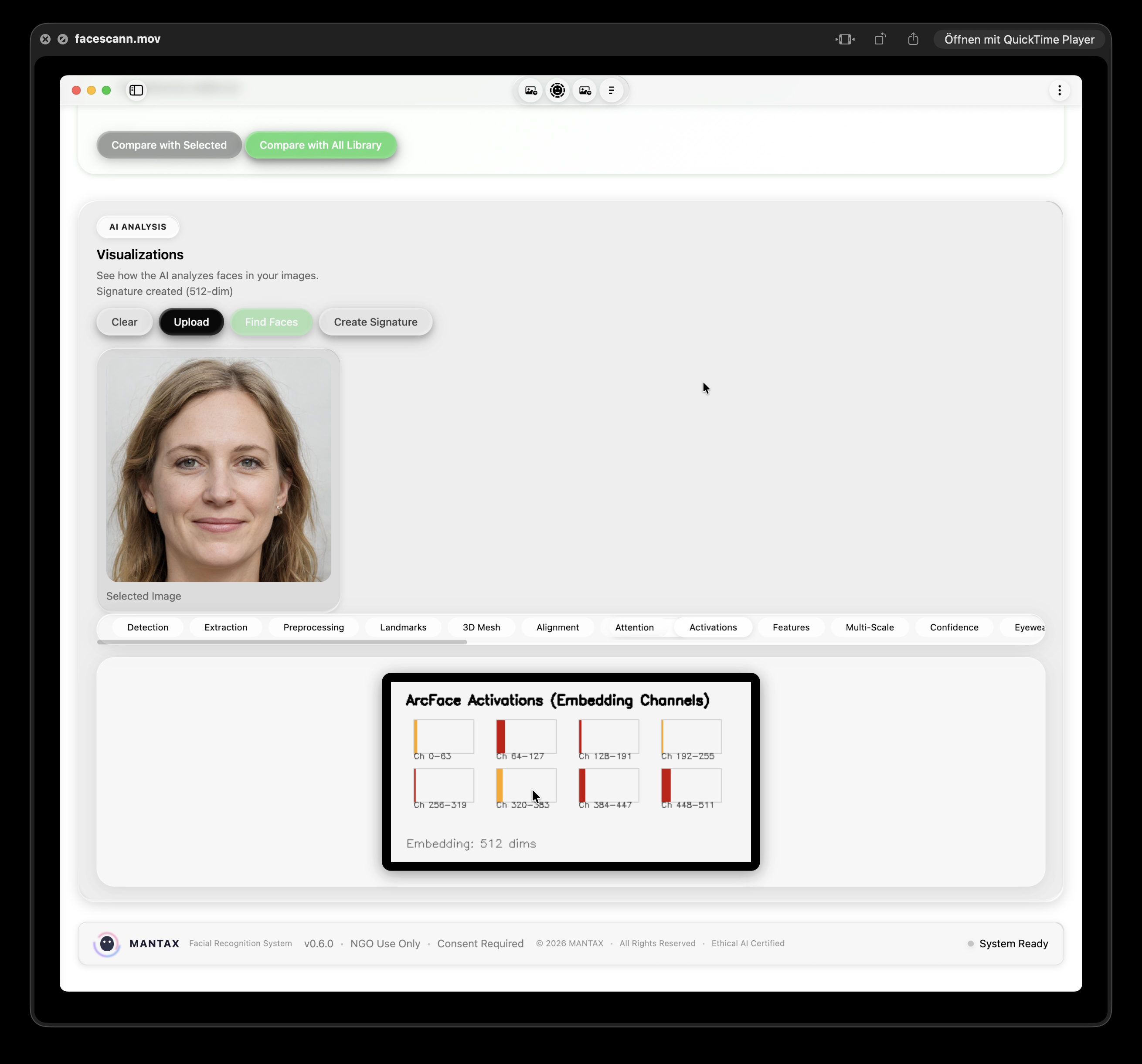

#Embedding Extraction (Dual-Model)

This is the core of the system: converting face images into numerical embeddings that can be compared.

#ArcFace Implementation

ArcFace is the primary matching model. It runs on ONNX Runtime and produces a 512-dimensional embedding for each face.
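Embeddings like these are typically compared with cosine similarity after L2 normalization. The sketch below shows that comparison in plain Python. It is illustrative only and says nothing about how MANTAX runs the ONNX model itself.

```python
import math
from typing import List, Sequence


def l2_normalize(vec: Sequence[float]) -> List[float]:
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(v * v for v in vec))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [v / norm for v in vec]


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embeddings, in [-1, 1]."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    return sum(x * y for x, y in zip(l2_normalize(a), l2_normalize(b)))
```

Normalizing first means the score depends only on the direction of each embedding, not its magnitude.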

#FaceNet Implementation

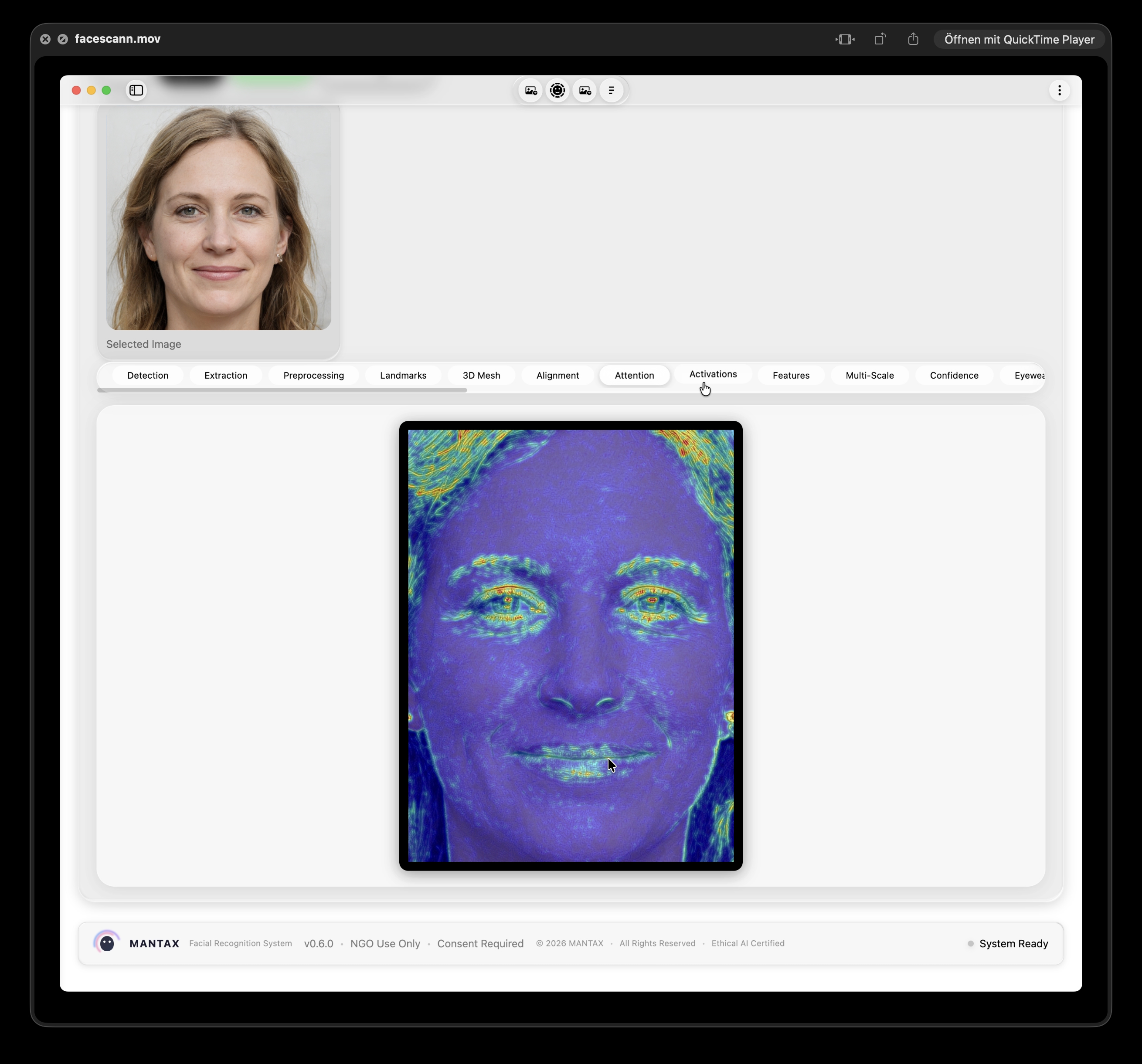

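FaceNet runs on PyTorch and produces a 128-dimensional embedding that serves as a secondary signal. Its intermediate activations also feed the neural activation visualizations.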

#Neural Network Activations

FaceNet also extracts intermediate layer activations for visualization.

Activation layers extracted:

| Layer | Output Shape | Purpose |
|-------|--------------|---------|
| conv1 | (64, 112, 112) | First convolutions |
| bn1 | (64, 112, 112) | First batch norm |
| layer1 | (64, 56, 56) | Low-level features |
| layer2 | (128, 28, 28) | Mid-level features |
| layer3 | (256, 14, 14) | High-level features |
| layer4 | (512, 7, 7) | Final features |
| embedding | (128,) | Final embedding |
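The spatial sizes in this table are consistent with a ResNet-style backbone fed a 224×224 input. That is an inference from the shapes, not something stated about the model. Under that assumption, the standard output-size formula reproduces each step. The kernel, stride, and padding values below are the usual ResNet ones and are assumptions too.

```python
def conv_output_size(size: int, kernel: int, stride: int, padding: int) -> int:
    """Spatial output size of a convolution or pooling layer (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1


# Assumed ResNet-style downsampling stages: (name, kernel, stride, padding).
# bn1 and layer1 keep the spatial size of their input, so they are omitted.
STAGES = [
    ("conv1", 7, 2, 3),    # 224 -> 112
    ("maxpool", 3, 2, 1),  # 112 -> 56, the input to layer1
    ("layer2", 3, 2, 1),   # 56 -> 28
    ("layer3", 3, 2, 1),   # 28 -> 14
    ("layer4", 3, 2, 1),   # 14 -> 7
]


def trace_sizes(input_size: int = 224) -> dict:
    """Return the spatial size after each downsampling stage."""
    sizes = {}
    size = input_size
    for name, kernel, stride, padding in STAGES:
        size = conv_output_size(size, kernel, stride, padding)
        sizes[name] = size
    return sizes
```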

#The Compare Endpoint (Complete Flow)

The /api/compare endpoint runs the full comparison flow, from the stages described above through to scoring and confidence bands.

#Dual-Model Scoring

The final score combines multiple signals, including the ArcFace and FaceNet similarities, using learned weights.
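A simple form of this kind of fusion is a weighted average over the available signals. The sketch below uses placeholder weights and signal names chosen for illustration. MANTAX's learned weights are not given in this post.

```python
from typing import Dict, Mapping

# Hypothetical weights for illustration only; not MANTAX's learned values.
WEIGHTS: Dict[str, float] = {"arcface": 0.6, "facenet": 0.3, "geometric": 0.1}


def combined_score(signals: Mapping[str, float],
                   weights: Mapping[str, float] = WEIGHTS) -> float:
    """Weighted average of per-signal similarities.

    Weights are renormalized over the signals actually present, so a
    missing signal (e.g. no landmarks) does not drag the score down.
    """
    used = {name: w for name, w in weights.items() if name in signals}
    total = sum(used.values())
    if total == 0:
        raise ValueError("no weighted signals available")
    return sum(signals[name] * w for name, w in used.items()) / total
```

For example, `combined_score({"arcface": 0.8, "facenet": 0.5})` uses only the two embedding signals and renormalizes their weights to sum to 1.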

#Confidence Bands

Rather than a binary match/no-match decision, the system outputs one of four confidence bands: Very High, High, Moderate, or Insufficient.
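Mapping a score to a band can be as simple as a descending threshold table. The band names below come from this post; the threshold values are placeholders chosen for illustration and would need calibrating against real data.

```python
from typing import List, Tuple

# (minimum score, band) in descending order. Thresholds are hypothetical.
BANDS: List[Tuple[float, str]] = [
    (0.85, "Very High"),
    (0.70, "High"),
    (0.50, "Moderate"),
]


def confidence_band(score: float) -> str:
    """Return the first band whose threshold the score meets."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be in [0, 1]")
    for threshold, label in BANDS:
        if score >= threshold:
            return label
    return "Insufficient"
```

Note that the lowest band is "Insufficient" rather than "no match": the output describes the strength of the evidence and leaves the decision to the investigator.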

#API Endpoints Reference

The Flask API exposes 31 endpoints in total; the table below lists 11 of them.

| Endpoint | Method | Purpose |
|----------|--------|---------|
| `/api/health` | GET | System health check |
| `/api/embedding-info` | GET | Current model info |
| `/api/diagnostics` | GET | System diagnostics |
| `/api/detect` | POST | Face detection |
| `/api/extract` | POST | Embedding extraction |
| `/api/add-reference` | POST | Add reference image |
| `/api/references` | GET | List all references |
| `/api/references/<id>` | DELETE | Remove reference |
| `/api/compare` | POST | Compare embeddings |
| `/api/visualizations/<type>` | GET | Get visualization |
| `/api/clear` | POST | Clear session |

#Visualizations (14 Types)

The system provides 14 different AI visualizations to help investigators understand how a score was computed.

#Visualization Implementation Example

#Session State Management

The API keeps its session state in memory.
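A minimal sketch of what such state might look like, using dataclasses. The class and field names are hypothetical. The methods mirror the add-reference, delete-reference, and clear endpoints, and the consent fields follow the metadata described in this post.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Reference:
    ref_id: str
    embedding: List[float]
    consent_status: str  # consent metadata travels with every reference
    source: str
    purpose: str


@dataclass
class SessionState:
    references: Dict[str, Reference] = field(default_factory=dict)
    probe_embedding: Optional[List[float]] = None

    def add_reference(self, ref: Reference) -> None:
        self.references[ref.ref_id] = ref

    def remove_reference(self, ref_id: str) -> bool:
        """Return True if a reference was removed, False if it was unknown."""
        return self.references.pop(ref_id, None) is not None

    def clear(self) -> None:
        self.references.clear()
        self.probe_embedding = None
```

Keeping the state in one object makes the clear operation trivial and gives the persistence layer a single thing to serialize.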

#Persistence

References are saved to a JSON file so that they persist across restarts.
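The following sketch shows one way to do this safely with the standard library: write to a temporary file, then rename it over the target so a crash mid-write cannot leave a truncated file. The function names and file layout are assumptions, not MANTAX's actual persistence code.

```python
import json
import os
import tempfile
from typing import Any, Dict


def save_references(path: str, references: Dict[str, Any]) -> None:
    """Write references to JSON atomically (temp file, then rename)."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as fh:
            json.dump(references, fh, indent=2)
        os.replace(tmp_path, path)  # atomic on the same filesystem
    except BaseException:
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise


def load_references(path: str) -> Dict[str, Any]:
    """Return saved references, or an empty dict if the file is absent."""
    if not os.path.exists(path):
        return {}
    with open(path, "r", encoding="utf-8") as fh:
        return json.load(fh)
```

Since the file holds biometric embeddings and consent metadata, it should live somewhere with restricted permissions on the user's machine.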

#Testing Infrastructure

The system includes end-to-end, edge-case, and frontend tests.

#Running Tests

#File Structure

#Key Design Decisions

#1. Dual-Model Architecture

Using both ArcFace (512-dim) and FaceNet (128-dim) together provides better discrimination than either alone. ArcFace handles the primary matching while FaceNet provides secondary signals and activation visualizations.

#2. Confidence Bands, Not Binary Decisions

The system outputs confidence bands (Very High/High/Moderate/Insufficient) instead of "match/no-match". This ensures human investigators always make the final decision.

#3. Local-Only Processing

No images are sent to external servers. All computation happens on the user's machine, addressing NGO privacy concerns.

#4. Non-Reversible Embeddings

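The system works with embeddings rather than raw pixels. An embedding is a lossy summary of a face, not an encoding of the image, so the original photo cannot be directly read back from it. This adds a layer of privacy protection, although embeddings remain sensitive biometric data.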

#5. Consent Tracking

Every reference image includes metadata about consent status, source, and purpose, which is essential for NGO documentation requirements.

#Summary

MANTAX is a fully functional ethical facial recognition system built with:

- Flask API (2,131 lines) with 31 endpoints

- Dual-model embedding (ArcFace 512-dim + FaceNet 128-dim)

- OpenCV DNN face detection with MediaPipe landmarks

- Electron desktop app with macOS Tahoe Liquid Glass UI

- Comprehensive testing (E2E, edge cases, frontend)

The system is designed for NGO use cases with:

- Local-only processing (no cloud)

- Human-in-the-loop verification

- Consent tracking

- Confidence bands instead of binary decisions

In the next blogpost, we'll explore the JavaScript refactoring journey: how we tackled a 3,429-line monolithic app.js and broke it into 7 modular files following best practices.

#Demo Video

Here's a demo showing a no-match scenario:

Next: The JavaScript Refactoring Story